I said a while back that nobody’s going to Mars any time soon. Which is true. But that doesn’t mean Mars isn’t interesting! Mars is very interesting.

So today’s paper is about Mars. Okay, it’s about a moon of Mars.

TLDR: one of Mars’ moons may periodically tear itself apart, turn into a system of rings around the planet, and then put itself back together.

You may recall that Mars has two small moons, Deimos and Phobos. Emphasis on small; they’re about 12 km and 20 km across, respectively. They’re so small that their weak gravity doesn’t pull them into spheres. They’re both irregular lumps, vaguely potato-shaped.

Now we have to take a step back and talk a little bit about the physics of moons.

You’ve probably heard of geosynchronous orbits. There’s a particular distance from the center of the Earth, about 42,000 kilometers (roughly 36,000 km above the surface), where a satellite takes exactly as long to complete one orbit as the Earth takes to spin once: just under 24 hours. Mars rotates much like Earth, so there are geosynchronous (1) orbits around Mars too.
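
That “particular distance” falls straight out of Kepler’s third law. An orbit whose period T matches the planet’s rotation has radius r = (GM T² / 4π²)^(1/3), measured from the planet’s center. Here’s a minimal sketch. The GM values and sidereal rotation periods are standard published figures I’ve plugged in, not numbers from the paper.

```python
import math

# Standard gravitational parameters (GM), in km^3/s^2
GM_EARTH = 398_600.4418
GM_MARS = 42_828.37

# Sidereal rotation periods in seconds (one full spin relative to the stars)
DAY_EARTH = 86_164.0905  # about 23 h 56 min
DAY_MARS = 88_642.66     # about 24 h 37 min


def synchronous_radius_km(gm: float, period_s: float) -> float:
    """Orbital radius (from the planet's center) whose period equals period_s.

    Kepler's third law: T^2 = 4 pi^2 r^3 / GM, so r = (GM T^2 / 4 pi^2)^(1/3).
    """
    return (gm * period_s ** 2 / (4 * math.pi ** 2)) ** (1 / 3)


r_earth = synchronous_radius_km(GM_EARTH, DAY_EARTH)
r_mars = synchronous_radius_km(GM_MARS, DAY_MARS)

print(f"Earth: {r_earth:,.0f} km from the center")
print(f"Mars:  {r_mars:,.0f} km from the center")
```

This gives about 42,164 km for Earth and roughly 20,400 km for Mars. For comparison, Phobos orbits about 9,400 km from Mars’ center (well inside that line) and Deimos about 23,500 km (just outside it).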

So an interesting fact about moons: if a moon orbits above geosynchronous orbit, it will tend to very slowly spiral outwards, raising its orbit and moving further away from its planet. (2) (“Very slowly” here means over billions of years.) Our own Moon is doing this, drifting away a few centimeters per year. Contrariwise, if a moon orbits /below/ geosynchronous orbit, it will tend to spiral /inward/, gradually getting closer to its planet.

Furthermore: the speed with which a moon’s orbit changes depends on the distance from the planet. So if a moon is drifting outwards, that drift will gradually become slower as it gets further away. It will never stop entirely, but it will slow down so much that the moon’s orbit will be stable over astronomical time — billions or tens of billions of years.

But if a moon is drifting inwards? Then as its orbit gets lower, the inward drift will accelerate, lowering the orbit even faster. It’s a positive feedback loop. Which is not going to end well for the moon.
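
To see why the inward case runs away, here’s a rough sketch using a textbook constant-Q tidal model (my assumption; the paper may well use something more sophisticated). In that model the drift rate scales as a^(-11/2), where a is the orbital radius, and its sign flips at synchronous orbit. The unknown constants (the planet’s tidal Love number and dissipation factor, the moon’s mass) only set the overall scale, so the sketch compares rates relative to each other. The 6.0, 2.8, 1.6 and 6.9 Mars-radii figures are rounded values I’ve supplied.

```python
# Signed, relative tidal drift rate in a simple constant-Q model (an assumption):
#   da/dt = +/- 3 (k2/Q) (m/M) R^5 sqrt(GM) * a^(-11/2)
# For a given planet and moon, everything except a^(-11/2) and the sign is a
# constant, so we drop it and only compare rates against each other.

MARS_SYNC_RADII = 6.0  # synchronous orbit: roughly 20,400 km / 3,390 km


def relative_drift_rate(a_radii: float, a_sync_radii: float = MARS_SYNC_RADII) -> float:
    """Drift rate in arbitrary units at orbital radius a (in planet radii).

    Positive: the moon drifts outward (its orbit is above synchronous).
    Negative: the moon drifts inward (its orbit is below synchronous).
    """
    if a_radii > a_sync_radii:
        sign = 1.0
    elif a_radii < a_sync_radii:
        sign = -1.0
    else:
        return 0.0  # exactly synchronous: no tidal drift in this model
    return sign * a_radii ** -5.5


phobos_now = relative_drift_rate(2.8)  # Phobos today, about 2.8 Mars radii
near_roche = relative_drift_rate(1.6)  # near the rigid-body Roche limit
deimos_now = relative_drift_rate(6.9)  # Deimos, about 6.9 Mars radii

print("Phobos drifts", "inward" if phobos_now < 0 else "outward")
print("Deimos drifts", "inward" if deimos_now < 0 else "outward")
speedup = near_roche / phobos_now
print(f"Inward drift at 1.6 radii is about {speedup:.0f}x faster than at 2.8 radii")
```

Under these assumptions the inward drift at 1.6 Mars radii is about 22 times faster than at Phobos’ current orbit. That steep a^(-11/2) dependence is the positive feedback loop in a nutshell.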

“Hm,” you may ask yourself, “so if close-in moons tend to spiral inwards towards the planet, faster and faster… there probably aren’t a lot of close-in moons?” And that’s exactly right! There are (at the moment) 467 known moons in the Solar System. Only about twenty of them are below their planet’s geosynchronous orbit.

So what happens as a moon spirals inward? Does it crash into the planet?

As it turns out, no. When a moon gets too close to its planet, tidal forces begin to tear the moon apart. The point where this happens is called the “Roche limit”, and it’s not a fixed distance: it depends on a bunch of things like the size and density of the planet, the density of the moon, and what the moon is made of. But wherever it is, if a moon hits the Roche limit, well…

[don’t stand]

[don’t stand so]

[don’t stand so]

[close to me]

The moon gets torn to shreds, and the shreds form rings. This is (we think) one way planets get rings around them. One recent hypothesis, for instance, is that Saturn’s rings originated with a now-extinct moon with the excellent name of Chrysalis.

[and Saturn throws in that crazy hexagon at its north pole, just to flex]

Okay, so back to Phobos. Phobos is orbiting about 2.8 Martian radii from the center of Mars. The Roche limit for a solid object is about 1.6 radii. It’s expected that Phobos will hit that limit in about 40 million years, give or take. It will then be pulled apart and destroyed. And Mars will get a lovely set of rings!
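
Where does a number like 1.6 come from? There are two classic textbook approximations: a rigid-body limit, d ≈ 1.26 R (ρ_planet / ρ_moon)^(1/3), and a fluid-body limit, d ≈ 2.44 R (ρ_planet / ρ_moon)^(1/3), where R is the planet’s radius. Here’s a minimal sketch. The densities, Mars’ radius and Phobos’ orbital radius are standard published values I’ve supplied, not figures from the paper.

```python
# Two classic Roche-limit approximations, as multiples of the planet's radius.
# A rubble pile with friction between its pieces would plausibly fall somewhere
# in between (an assumption, not a result from the paper).

RIGID_COEFF = 2 ** (1 / 3)  # about 1.26: a rigid sphere held together by its own gravity
FLUID_COEFF = 2.44          # Roche's classic value for a strengthless, fluid moon


def roche_limit_radii(rho_planet: float, rho_moon: float, coeff: float) -> float:
    """Roche limit in planet radii; the densities just need consistent units."""
    return coeff * (rho_planet / rho_moon) ** (1 / 3)


RHO_MARS = 3.93          # g/cm^3, mean density of Mars
RHO_PHOBOS = 1.86        # g/cm^3, mean density of Phobos
MARS_RADIUS_KM = 3_389.5
PHOBOS_ORBIT_KM = 9_376

rigid = roche_limit_radii(RHO_MARS, RHO_PHOBOS, RIGID_COEFF)
fluid = roche_limit_radii(RHO_MARS, RHO_PHOBOS, FLUID_COEFF)
phobos = PHOBOS_ORBIT_KM / MARS_RADIUS_KM

print(f"Rigid-body Roche limit: {rigid:.2f} Mars radii")
print(f"Fluid-body Roche limit: {fluid:.2f} Mars radii")
print(f"Phobos today:           {phobos:.2f} Mars radii")
```

The rigid value comes out at about 1.62 Mars radii, matching the figure for a solid Phobos. The fluid value, about 3.13, lies outside Phobos’ current orbit, which hints at why it matters so much how well Phobos actually holds together.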

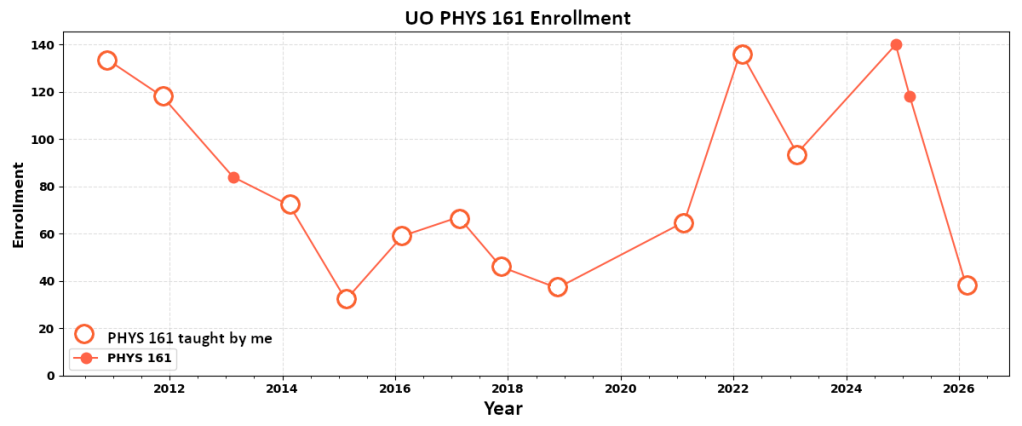

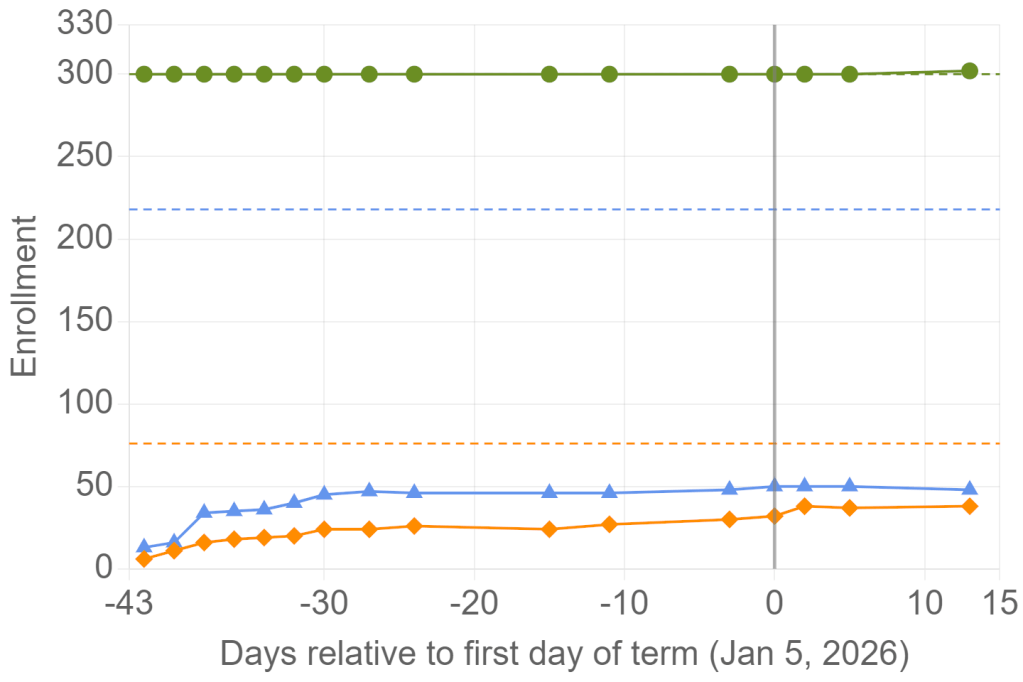

Which, okay, but… the Solar System is about 4.5 billion years old. Phobos is scheduled for destruction in 40 million years. That’s less than one percent of the lifetime of the Solar System. Isn’t it a bit of a coincidence that we should be seeing Phobos right now, just as it’s starting its death spiral?

(It’s true that we’re seeing other moons doing this at Jupiter, Uranus and Neptune. But those are giant planets with ridiculous numbers of moons; Jupiter alone has around a hundred. And their gravitational fields are so large and strong that they regularly capture new moons from wandering asteroids and such. So a moon in a decaying orbit around Jupiter is not exactly a surprise.)

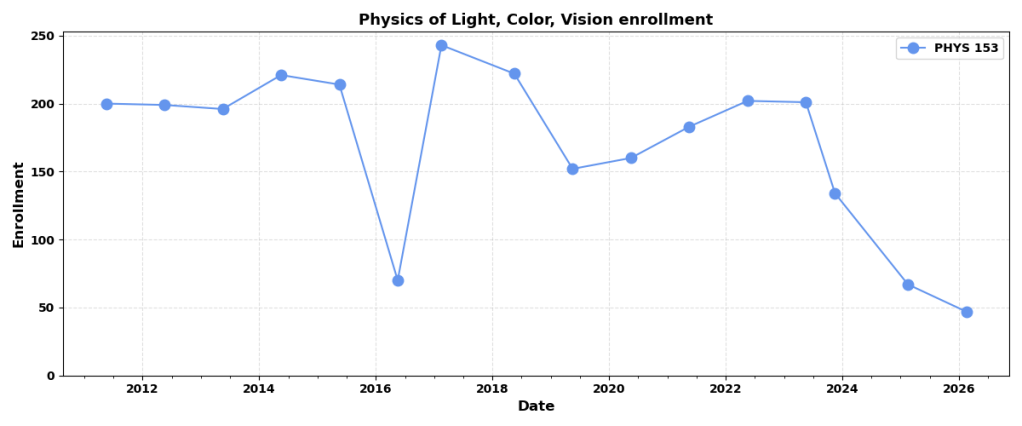

But okay, so Mars will have rings one day. Here’s a thing about rings: they don’t last. Over geological time, they tend to widen, spreading inwards and outwards. (3)

Eventually, the innermost ring particles hit the planet’s atmosphere and either burn up or crash. Meanwhile the outermost ring particles drift outwards until the ring is attenuated into nothing. This process can be delayed or complicated by the presence of other moons — Saturn famously has a bunch of “shepherd moons” constraining its rings — but the point here is, rings don’t last forever.

[well, they don’t]

So a while back someone had a crazy idea: what if, after Phobos breaks up into a ring, some of the ring particles disperse outwards and drift far enough beyond the Roche limit to re-coalesce? Their mutual gravity would be very weak, sure. But over millions of years, maybe they could gradually recombine into a new moon! One outside the Roche limit!

The new moon would be smaller, of course — at least half of Phobos’ mass would be lost. But while Phobos is pretty small for a moon, it’s still about ten trillion tons. Cut Phobos in half and you’ve still got a moon.

Alas, the math didn’t quite work. Phobos’ Roche limit was too low. Most of its mass would fall onto Mars. Not enough ring material would climb high enough to form a new moon.

And there the matter rested for a bit, until this latest paper. Which asks the question: well, what if Phobos isn’t a solid object? What if it’s a rubble pile?

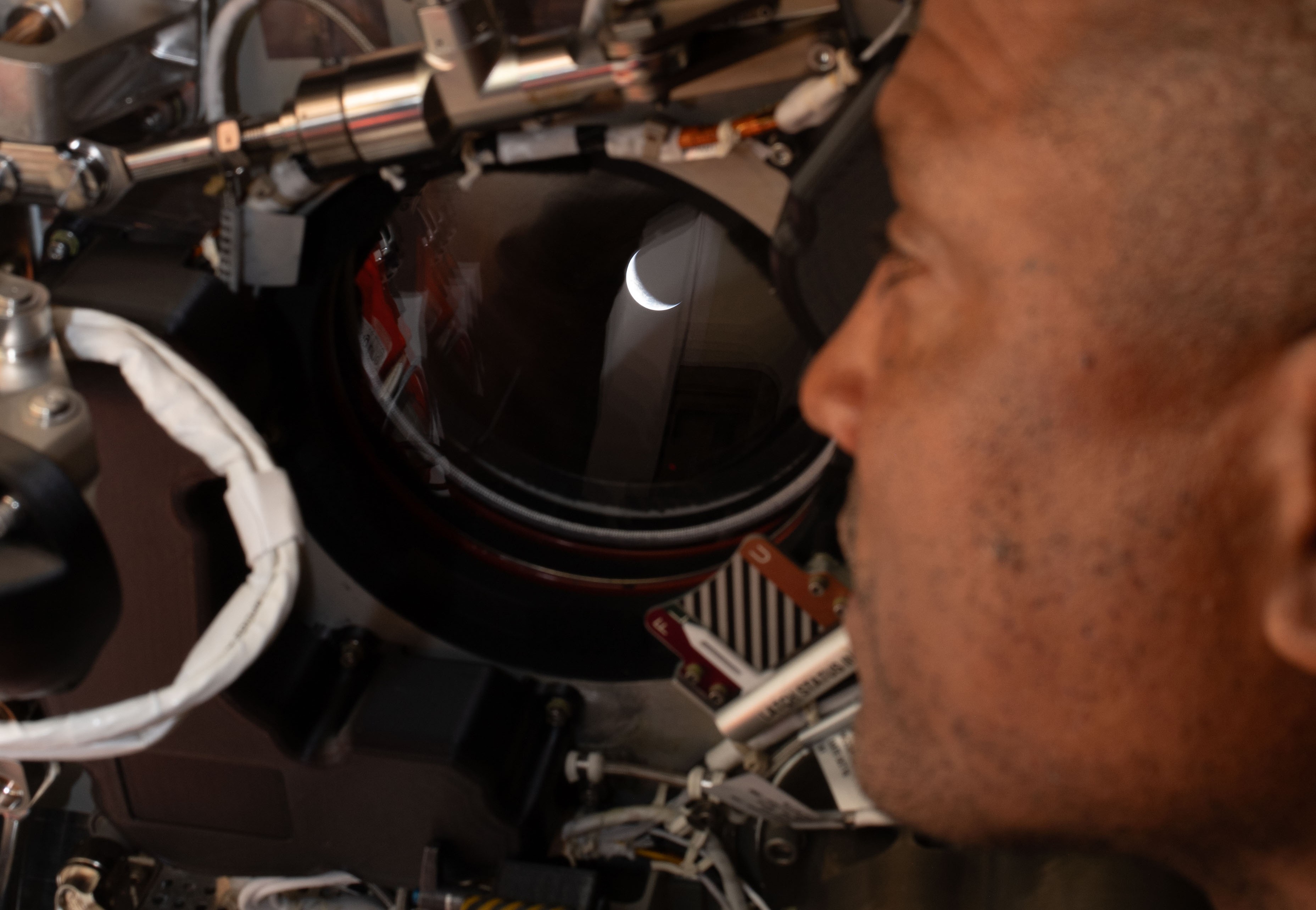

See, in the last little while we’ve been sending probes to asteroids. And while asteroids all look pretty solid from a distance, when you get closer? Turns out a lot of them aren’t solid at all. They’re just big floating piles of rocks, sand and gravel, very loosely held together by weak gravity.

[everybody looks a bit rougher in close-up]

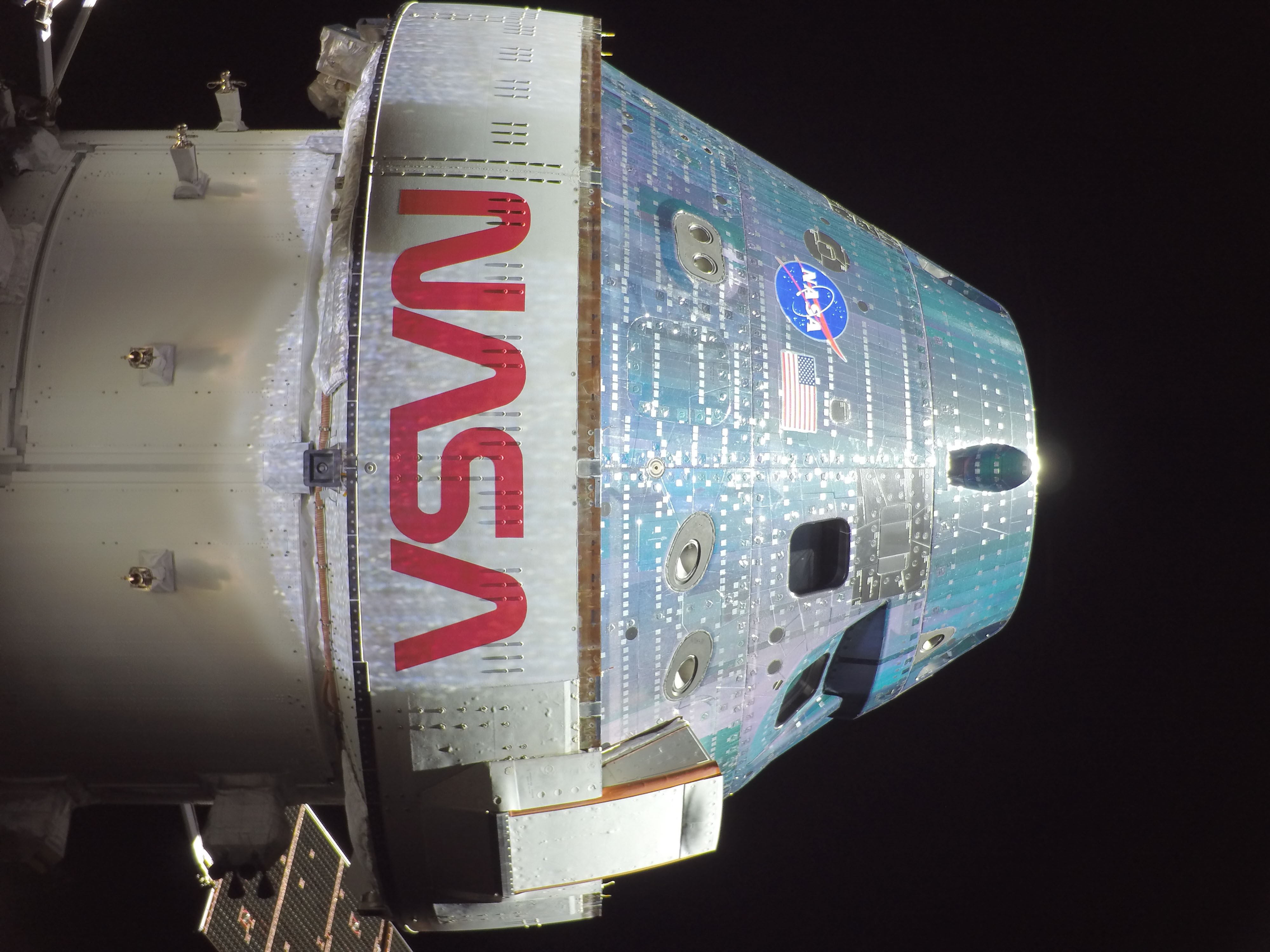

You remember the DART mission a little while back? It’s when NASA blasted the hell out of a small asteroid, because it was cool. I mean, sorry, because for planetary defense and also science.

[we tried negotiating with the so-called “moderate” asteroids]

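Well, that impact didn’t just hit the small asteroid. It blasted a huge plume of debris off into space, and the recoil from all that ejected material shoved the asteroid much harder than the spacecraft alone could have. Because that little asteroid was actually a rubble pile. So the DART impact was a bit more… impactful than expected.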

[pretty much this, yeah]

Which brings us back to today’s paper! Because if asteroids can be rubble piles, why not small, asteroid-sized moons as well?

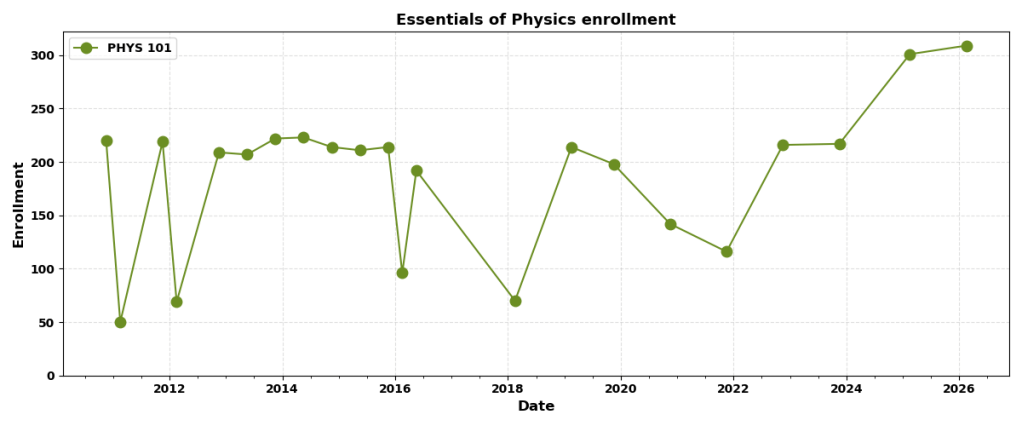

And it turns out that if Phobos is a rubble pile, everything changes. Because it’s much easier to tear apart a rubble pile than a solid object, yes? So a rubble-pile Phobos has a higher Roche limit, further out from Mars. And if that’s the case, then Phobos will die sooner than we thought, and the ring system that it produces will start higher and spread out further away from Mars.
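
To see why a higher Roche limit means an earlier death, here’s a rough sketch under a textbook constant-Q tidal model (an assumption; the paper’s model is surely more detailed). In that model the inward drift rate scales as a^(-11/2), so the time to fall from radius a0 to a1 scales as a0^(13/2) - a1^(13/2). I’ve calibrated it to the roughly 40-million-year figure for reaching 1.6 Mars radii. The 2.2-radii “rubble pile” limit is a purely hypothetical value picked for illustration, not a number from the paper.

```python
# Time for a moon to spiral from radius a0 down to a1 (in planet radii),
# assuming a constant-Q tidal model where |da/dt| = c * a^(-11/2).
# Integrating dt = a^(11/2) da / c gives t = (a0^6.5 - a1^6.5) / (6.5 * c).

PHOBOS_NOW = 2.77                # current orbit, in Mars radii
RIGID_ROCHE = 1.6                # rigid-body Roche limit, in Mars radii
YEARS_TO_RIGID = 40e6            # rough time to reach the rigid limit, from the text
HYPOTHETICAL_RUBBLE_ROCHE = 2.2  # made-up value, for illustration only


def infall_time(a0: float, a1: float, c: float) -> float:
    """Time to spiral inward from a0 to a1 when |da/dt| = c * a^(-11/2)."""
    return (a0 ** 6.5 - a1 ** 6.5) / (6.5 * c)


# Calibrate c so that reaching the rigid limit takes about 40 million years.
c = (PHOBOS_NOW ** 6.5 - RIGID_ROCHE ** 6.5) / (6.5 * YEARS_TO_RIGID)

t_rigid = infall_time(PHOBOS_NOW, RIGID_ROCHE, c)
t_rubble = infall_time(PHOBOS_NOW, HYPOTHETICAL_RUBBLE_ROCHE, c)

print(f"Reach rigid limit:        {t_rigid / 1e6:.0f} million years")
print(f"Reach hypothetical limit: {t_rubble / 1e6:.0f} million years")
```

Under these toy assumptions the moon reaches the higher limit about 8 million years sooner (roughly 32 rather than 40 million years). Most of the infall time is spent in the slow early part of the spiral, so raising the limit trims the schedule rather than slashing it; the bigger effect is that the ring starts out higher.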

And if that’s the case, then… suddenly the math works. Enough ring material will end up far enough out to recombine into a smaller moon well outside the Roche limit. But that moon will still be sub-geosynchronous, so it will start spiraling inwards again. And so, over tens to hundreds of millions of years, the cycle will repeat.

It won’t be able to repeat forever, because Phoenix Phobos will be smaller every time. Eventually there won’t be enough ring material to produce a moon. But it could potentially continue for several more cycles.

And extending it backwards into the past… yeah. Maybe Phobos used to be a lot bigger! Maybe it’s been through several cycles already. Spiral inwards, hit the Roche limit, break up into rings… rings spread out, inner part falls onto Mars, outer part recombines into a new, smaller version of Phobos… this could have been going on for a while now. And you’ll notice that this solves the “why are we seeing Phobos just as it’s dying” question. It’s not actually dying! Sometimes Mars has two moons; sometimes it has one moon, and a pretty ring system.

If the dinosaurs had owned telescopes, they could have seen rings around Mars. Whatever intelligence inhabits Earth in 50 million AD (4) may see rings around Mars. Us? We just happen to be catching Mars and Phobos at this particular point in their cycle.

But wait! As a bonus… remember Deimos? The other, more distant moon? Well, if the rubble pile model is correct, then some ring material might eventually be captured by Deimos. So while Phobos would get smaller with every cycle, Deimos would get a little bit bigger. And also, Deimos should be covered in a thick layer of Phobos material.

Okay! Cool theory.

Is it true?

Well, we don’t know. But we might know pretty soon.

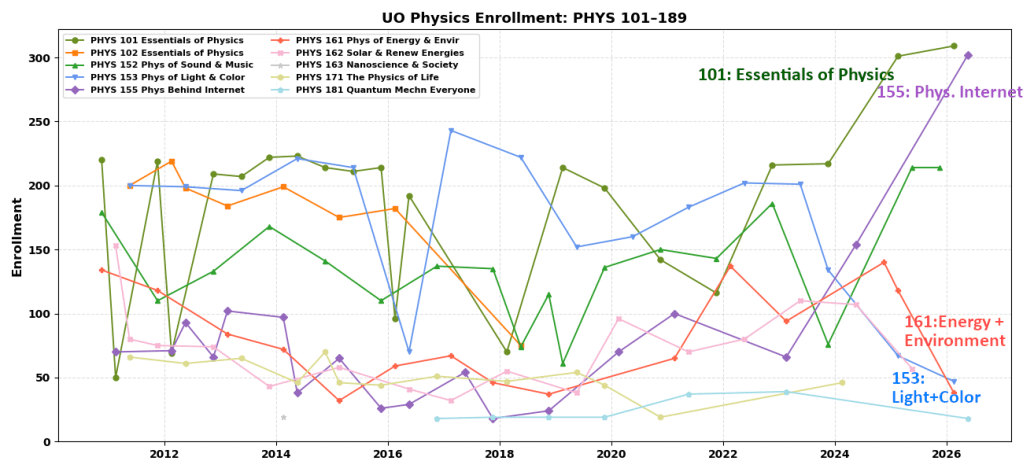

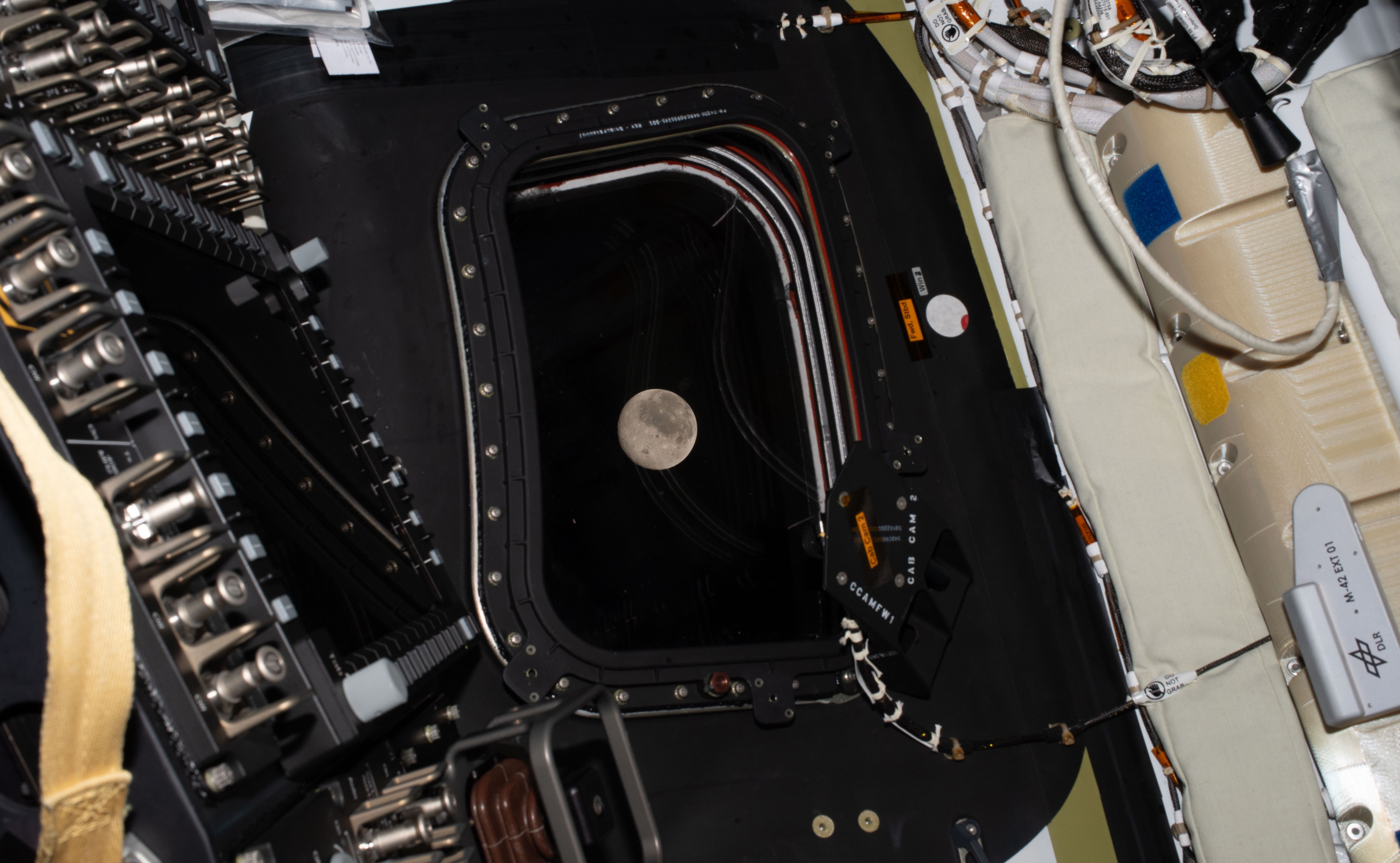

JAXA, the Japanese space agency, is planning to send a probe called MMX (Martian Moons eXploration) to Phobos. It’s scheduled to launch in the next Mars launch window, which is in November-December 2026. That would bring it to Mars orbit by September 2027, give or take. JAXA has only a fraction of NASA’s budget, but they have a pretty good track record of successfully sending probes to do cool science in space. Their Phobos probe will orbit Phobos, scan it with a bunch of instruments, and drop a rover onto the moon’s surface. Then it will swing in close and take a bite out of Phobos’ surface for a sample return to Earth. And then for an encore, on its way out the door, it will do a close flyby of Deimos as well.

[unironically, fingers crossed for this]

So — if all goes well — we’re going to learn much, much more about the moons of Mars. And we could have an answer to the “rubble pile or solid” question in the next couple of years.

And if the sample return succeeds… well, we’d have some stuff from another world, which is astonishing enough by itself. But not just any stuff. It would probably look like a handful of sand and gravel. But it might be sand and gravel that has spent the last couple of billion years cycling between being part of a moon, then part of a ring system around Mars, and then part of a moon again.

And that’s all.

(1) don’t be that guy

(2) because reasons

(3) because reasons

(4) probably raccoons.